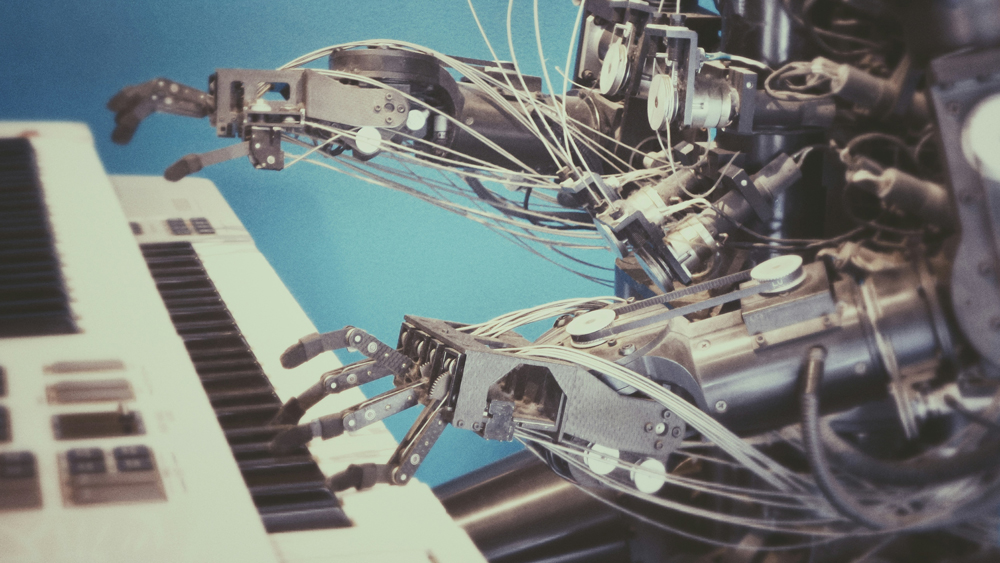

As the road map for AI adoption in a QMS continues to unfold, teams must stay informed about emerging technologies and trends. Photo by Possessed Photography on Unsplash

Historically, the sensitive personal and proprietary company information held in life sciences quality management systems (QMS) has made quality management teams reluctant to adopt AI. Add the complex global regulatory environment and the penalties for noncompliance, and that reluctance grows as teams work to reduce risk.

However, as the capabilities and benefits of AI and related technologies such as machine learning (ML), generative AI (GenAI), and large language models (LLMs) become more compelling, quality management teams are considering them as a way to improve productivity and efficiency, reduce errors and duplication of effort, and empower industry professionals in their day-to-day activities.

An initial resistance

Initially, quality management teams were reluctant to adopt AI due to concerns about handling sensitive personal information. Two significant factors contributed to this.

…

Comments

Human experience provides critical context that engines lack. Well said. When we moved from slide rules to calculators, a human still needed to be involved. AI/ML is a tool, like a calculator.
